What is Decimal Scale Normalization?

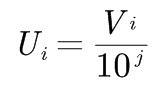

Decimal scale normalization is a method in which each value is normalized by shifting its decimal point. The number of places to shift is determined by the maximum absolute value in the data set. If $V_i$ is a value of attribute A, then its normalized value $U_i$ is given as

$$U_i = \frac{V_i}{10^j}$$

where $j$ is the smallest integer such that $\max|U_i| < 1$.


Let’s clarify it with an example. Suppose we have a data set whose values range from -9900 to 9877. The maximum absolute value is 9900, so to perform decimal scale normalization we divide each value in the data set by 10000, i.e. j = 4, because 10^4 = 10000 is the smallest power of 10 that is greater than 9900. The normalized values then range from -0.99 to 0.9877.
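The same calculation can be sketched in a few lines of Python. This is only an illustration: the function name `decimal_scale` and the sample list `data` are made up for this post, and the sketch assumes a non-empty list of numbers and only considers j >= 0 (no scaling up of values that are already below 1).

```python
def decimal_scale(values):
    """Return (scaled_values, j) for decimal scale normalization.

    Hypothetical helper for illustration; assumes `values` is non-empty
    and restricts j to non-negative integers.
    """
    max_abs = max(abs(v) for v in values)
    j = 0
    # Increase j until the largest magnitude falls strictly below 1.
    while max_abs / 10 ** j >= 1:
        j += 1
    return [v / 10 ** j for v in values], j


# Sample data covering the range used in the example above.
data = [-9900, 9877, 120, -45]
scaled, j = decimal_scale(data)
print(j)       # 4
print(scaled)  # [-0.99, 0.9877, 0.012, -0.0045]
```

Note that the loop uses `>= 1`, so a maximum absolute value of exactly 1000 gives j = 4 rather than 3, which keeps every normalized value strictly below 1.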

